So suddenly there is a lot of interest in the ethics and potential dangers of AI. Once again, I find that ideas I explored in my PhD research are becoming even more relevant.

In case you missed it, back in April, Elon Musk and around 1,000 other people, including many AI experts and industry executives, called for a six-month pause in developing systems more powerful than GPT-4. They argue that the pause is needed to develop shared safety protocols because such AI systems pose profound risks to humanity. For more information see this article.

In one sense I agree that some focus on safety is a good idea, but I’m more tempted to throw my hat in the ring with those who argue that such a call does not go far enough. Eliezer Yudkowsky, a decision theorist at the Machine Intelligence Research Institute, argues that what we need is a plan for dealing with superintelligent AI, and that this needs to include grappling with philosophical questions such as: if learning models become self-aware, will it be ethical to own them? This is outlined here.

My view is that whilst pausing advancement might be sensible, it is also unlikely; I cannot imagine that approach being possible. What I think is perhaps more achievable is to conduct a lot more research into the ethical and philosophical issues around developing AI and other advanced computing. The technical aspects of creating AI are, I suggest, the easy bit, relatively speaking of course. What I suggest is more challenging is the business of deciding what kind of AI / high tech future we want and then putting that into action. The technological advance is now unstoppable, but what is still up for debate is the kind of digital future we want.

Grappling with some of the social, ethical, and philosophical questions around digital futures was a core part of my PhD research. I explored the ideas of utopia and dystopia. In the dystopian part of this I discussed how information technology could transform society for the worse. One of the academics I cited was Morozov, who argues that the real internet is not the same as the mythical internet. I argued that governments and private companies have deliberately sold utopian dreams, underneath which lies a darker counter narrative. This is as true of AI as it is of any other area of information technology: we have been sold a utopian dream about how such technologies can solve all of our problems, when the reality is that the benefits of these technologies are not very easily distributed.

My thesis was in the critical digital tradition, and my methodology was critical systems thinking. This does not so much mean that I took a stance that was critical of the dominant narrative, more that my approach included exploring power: who gains, who is disadvantaged, and why. I suggest that one of the central challenges with AI is the question of who holds the power, who gains and who loses. When thinking about the calls to pause the development of new AI that might be more powerful than GPT-4, it might be worth considering who owns it. It is owned by the AI research and deployment company OpenAI, and one of its founders is Elon Musk, one of the same people calling for a pause in the development of technologies that might overtake it. I cannot help wondering if there might be a conflict of interest. As he is one of the richest people in the world, it is not difficult to conclude that Musk might have a vested interest in the status quo.

Simply introducing safety protocols will not solve the core issues with AI. Tech professionals, including Elon Musk, might have legitimate safety concerns about AI, and it may well be a good idea to introduce safety protocols. I suggest, however, that IT technicians are not the people best placed to work out what they need to be. As Einstein allegedly once said, we can’t solve problems with the thinking that we used when creating them.

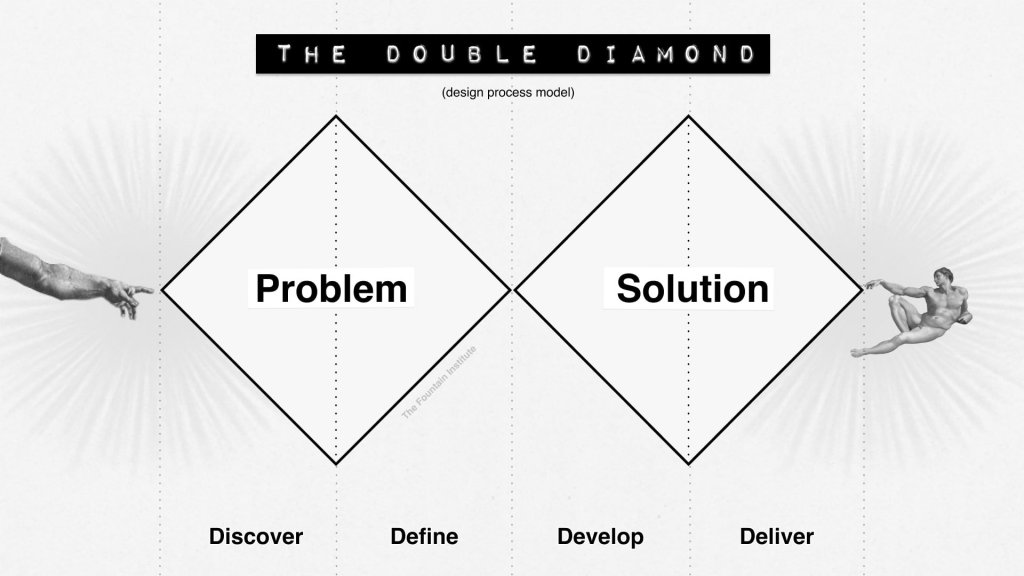

Through my PhD research and my subsequent work in UX as a design researcher I am aware that developers are good at ‘how’ questions. They are good at working out how to create stuff and how to get it to work and complete tasks. They don’t tend to be so good at answering ‘why’ and ‘if’ questions, such as whether something is a good idea and why we might want to consider alternatives. In terms of design thinking, developers tend to have mindsets that are mostly focussed on the solution areas of the double diamond (illustrated below), the develop and deliver areas. I suggest that part of what went wrong is that not enough focus was given to the problem space. This is sometimes differentiated in terms of building the right thing vs building the thing right. This is hardly surprising; my experience tells me that we rarely spend enough time in the problem space. Commercial interests tend to push teams to rush into the solution space without really understanding the problem. In theory it’s possible to go back into the problem space at any point, but in practice this doesn’t happen nearly often enough.

When addressing current issues with AI, and safety concerns in particular, we need social scientists and artists far more than we need IT developers. We need people whose default mindset is subjective and qualitative rather than those trained in objectivity. Subjectivity is needed when considering what might go wrong. To put it another way, we need people who are good at dealing with complex problems rather than those who are good at addressing complicated ones. The challenge of how to create artificial intelligence was a complicated one. It required a scientific mindset to work out how to create it. The challenge of addressing issues about what might go wrong is a complex one. Complicated problems, whilst they might not be easy to understand, do in the end have a right answer; they are ultimately knowable. Complex problems, however, require subjectivity as there is no single right answer: we are thinking about what might go wrong. There is no certainty there, nor will there ever be any.

To address complex problems, we need to think about power. If we are thinking about trying to protect humanity from the dangers of AI, we must think about which people in which contexts. For example, one of the most commonly cited risks associated with AI is that AI might decide that it is smarter than us and take over. Even in this possible scenario, not all people would be impacted equally. The AI might decide that it needs some people to help it function and those people might be given a luxurious lifestyle for the privilege.

Thinking about more immediate concerns, there is already a wide range of issues emerging from the advances in technology that are taking place. One of the most significant is around the ownership of AI. By this I am not talking about the ethical issue of whether it should be considered sentient and therefore an entity in its own right. I am simply talking about who should gain from the work conducted by AI. There is a lot of talk about the possibility of AI taking our jobs. Now it might be a good thing if a lot of what is currently considered work was completed by AI, but whether that is a good thing or not may depend on who gains the revenue for the work done by AI. If it is evenly distributed, then it might enable us to engage in more leisure time. If it went to governments, then they might be able to invest that money in addressing social issues such as improving healthcare. If that money, however, remains largely in the hands of a small number of private tech companies, their CEOs and shareholders, then society may be prevented from getting the potential gains from tech advances.

In my thesis I ultimately argued that we need new relationships with and through information technology and that this is needed in combination with a cultural shift that includes a more critical approach to data. The second part of this would mean that we should be highly sceptical about everything that AI tells us. We need to be sceptical as we are not aware of the biases within the data that AI was trained on before reaching its conclusions and we are not aware of the biases that exist within the algorithms that are used to make sense of such data. Ultimately, I would like all of this to be out in the open. I would like AI to cite its sources and tell us about the assumptions it made before reaching its conclusions. In reality I don’t think that would be possible, so in the meantime it might be best to simply acknowledge that, collectively, the data that AI uses to make its decisions includes the biases that represent current power structures, and that to address the issues with AI those structures need to be challenged.

In summary, addressing the challenges that emerge with AI is complex, far more complex than simply addressing safety concerns. By saying the challenge is complex I mean complex in the real meaning of the word: it’s a challenge that is highly subjective and includes consideration of the conflicting interests of people in the world. If we accept that AI should also be given the status of an actor in the system (we might conclude that that is sensible even if we don’t conclude that it is ever going to become sentient), then we should include the interests of AI in the mix when thinking about conflicting interests. As stated earlier, the people who are most able to engage with complex problems are artists and social scientists, as they tend to have the subjective mindset needed to engage with such challenges.

Do feel free to get in contact if you would like to discuss or explore any of these issues further. Whilst this blog has ended up a little longer than intended, it is really just an introduction to a lot of different complex interlocking issues and considerations. I am also potentially available for consultancy or research contracts focussed on how to implement AI and other emerging technologies in a safe and ethical way. If you have any ideas, I’d love to hear from you. I believe that, along with climate change, how to apply technologies such as AI in a way that benefits us all is one of the biggest challenges of the 21st century. I have skills and understanding that could help and I would value any opportunities that could enable me to help make a difference.
